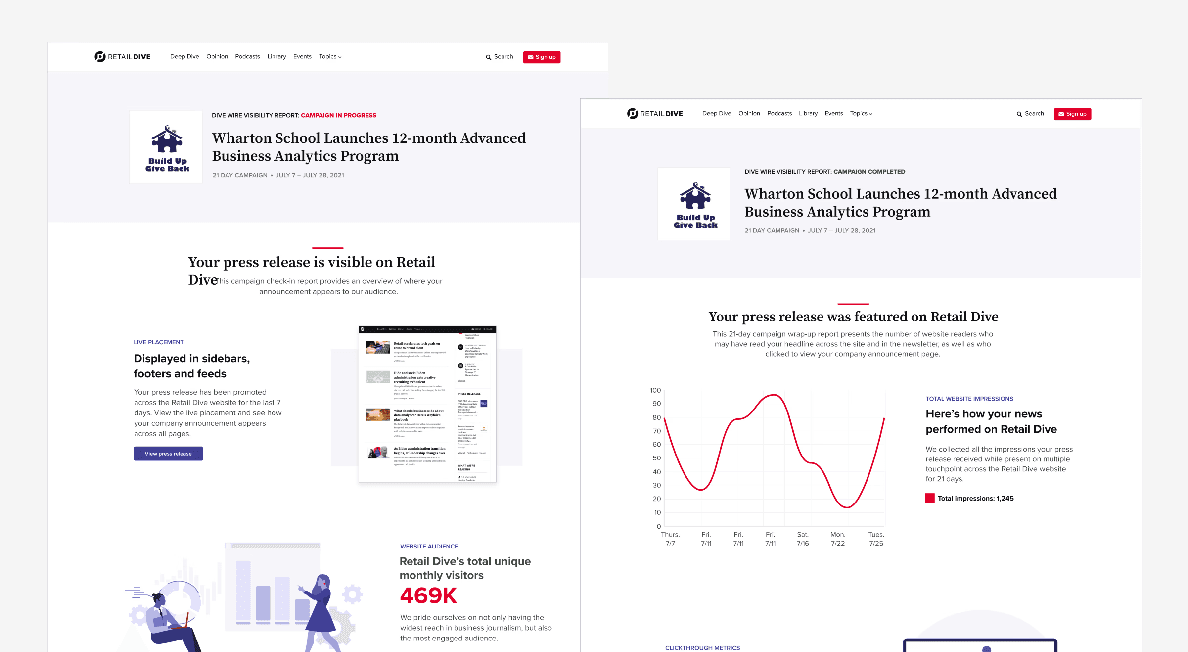

DiveWire Visibility Report

Created a Visibility Report that proves DiveWire performance with clear audience reach metrics. Shipped a static v1, then scaled to dynamic 7-day and 21-day reporting as data became available—driving repeat purchases and contributing to revenue growth.

Client

Industry Dive

Services

Product Strategy

Revenue Impact

Data Visualization

Duration

12 weeks - Incremental delivery across multiple releases

At a glance

• Problem: DiveWire customers lacked clear proof of visibility after publishing, which created uncertainty about value and weakened repeat purchase confidence.

• Role: Led product design for the Visibility Report (UX strategy, information design, reporting model, and cross-team alignment).

• Solution: A Visibility Report that surfaces key audience reach metrics in a simple, trust-building format—delivered in phases from static v1 to dynamic 7-day and 21-day reporting.

• Validation: Iterated through customer feedback, stakeholder review, and performance signals as data infrastructure matured.

• Impact: Reduced customer uncertainty by making results tangible and comparable across time windows—supporting retention and repeat purchase decisions.

Summary

I created the DiveWire Visibility Report to answer the customer’s most important post-purchase question: “Did anyone actually see my release?” Because dynamic performance data didn’t exist initially, I shipped a static version first, then evolved the report into a dynamic experience as data became available—scaling it into 7-day and 21-day reporting views that made outcomes easier to trust and act on.

Top Metrics

• Audience reach visibility: reporting designed to represent distribution across Industry Dive’s ecosystem (14M readers, 29+ publications)

• Reporting scaled from static v1 → dynamic reporting → 7-day and 21-day views as data became available.

• Business outcome: improved customer confidence and supported repeat purchases.

The Problem

Even when DiveWire delivered distribution, customers struggled to verify results. The experience left them asking:

• Where did my announcement show up?

• Did it reach the audience I paid for?

• What impact did I get over time?

Two root issues drove the problem:

• Lack of performance proof: Customers didn’t have a clear, centralized way to view audience reach metrics tied to their release, creating uncertainty about value.

• No reporting foundation: The dynamic data required for real reporting wasn’t available initially, which meant a “fully baked” solution couldn’t ship on day one.

This uncertainty suppressed confidence and made repeat purchases harder to justify.

The Stakes

DiveWire is a paid product. If customers can’t clearly see results, they question the purchase—even if distribution occurred. The Visibility Report needed to:

• strengthen post-purchase trust

• reduce support burden driven by “where did it show?” questions

• support retention and repeat purchase decisions by making value measurable

The Impact

Over the course of a year, I led the end-to-end design of DiveWire’s Visibility Reporting suite—turning a basic press release distribution tool into a data-driven product that supports both retention and new customer conversion. I architected a modular reporting framework that translated campaign performance into client-ready proof of reach—impressions, placement views, and newsletter opens—then scaled it from an initial MVP to automated 7-day and 21-day reports that ship with every order. This made analytics an always-on product feature (not a one-off deliverable) and gave Sales a consistent performance narrative to sell and renew against.

Business impact

• 64% sales growth

• $475K incremental revenue from repeat purchases

• $108K revenue from first-time, self-serve package purchases

• 70% reduction in customer-service inquiries

Why it worked

The reports moved customers from “Did this run?” to “What reach did I earn—and what should I do next?”—increasing confidence, reducing support burden, and strengthening the renewal story with concrete performance evidence.